Get Ready for the Internet to Double in Size (and Halve in Quality)

With AI, producing 'content' is trivially easy, so everyone will do it

This morning my friend Keith from Swim Practice sent me an audio file. In it two likeable, if slightly banal, radio hosts were discussing his writing. I was excited for a few moments until he told me that his friend had just sent the link to Google’s NotebookLM and this was an automatic AI audio overview.

Incredible.

So I did the same for Voice of Reason: I just went to NotebookLM and typed my archive page’s address into the box where it said “link.” Within five minutes I had the same thing for my own Substack.

It’s a delightful ego boost but not fascinating radio. It is, however, amazing. It sounds extremely human, with asides, insights and analysis that are not explicitly there on the page — even if it has a scripted Home Shopping Network feel. And this is barely 12.05am in the Artificial Intelligence day.

We are tumbling into an AI black hole. Once relegated to the pages of sci-fi novels and tech labs, AI is now reshaping how we consume and create content. There is no escaping it: I have, obviously, used AI to make sections of this article and, I assume, there is AI in the background of Substack making that work.

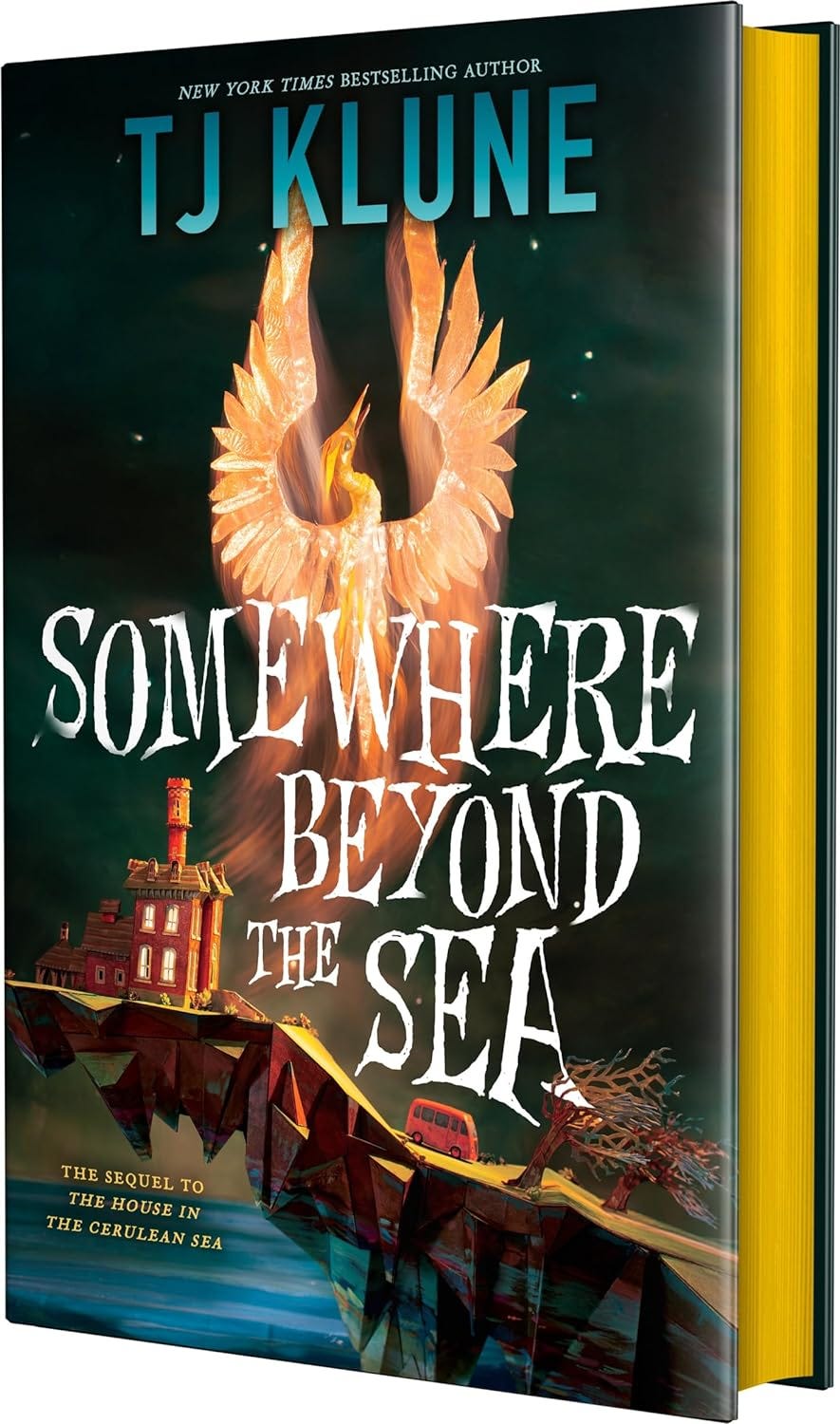

Indeed, writing a human-powered review of a human-written (I think!) novel about humans with magic but without any AI tools, as I did last week for Book and Film Globe (TJ Klune’s “Somewhere Beyond the Sea”), has, in the space of a few days, become a multi-layered anachronism.

Among its most transformative and potentially troubling abilities is AI’s power to summarize information, to take exact instruction, and to synthesize human voices and texts at breathtaking speed and scale. This technology promises to make our lives more efficient and information more accessible. But there are a number of drawbacks: by reducing the cost of content production to almost zero, it threatens to inundate us with mindless “content.” As a result, it threatens to upend how we understand and trust what we read or hear.

There is constructive power in this.

Imagine, for a moment, an AI that can effortlessly turn a two-hour podcast into a five-minute read, or condense dense academic papers into clear, digestible summaries tailored to your interests. Put aside the pleasure of reading for a moment: there will be no need to sift through mountains of content because AI can do it for you, with a speed and precision that are impossible for humans to match. It makes information easier to access, and that access is personalized, adjusting to your reading habits, favorite topics, and even preferred tone.

And AI has the potential to facilitate collaboration, democratizing content creation by lowering the barriers to entry. Complex research, creative brainstorming, and even fiction writing can be enhanced by AI's capacity to generate ideas, provide inspiration, and summarize existing material. For content creators, it’s like having a supercharged assistant ready to distill insights, generate drafts, and find connections you might have missed.

Language, too, can be transcended. With AI's power to translate and adapt content to different languages and dialects, knowledge can cross cultural divides more easily than ever. I like to play with that by getting things creatively wrong with foreign-language speakers who know I am playing, but for most people it’s a simple, unambiguous tool. For people with disabilities or learning differences, AI-generated summaries and synthesized voices can open doors to information and engagement that were previously closed. The promise of this technology is immense; it’s hard not to get swept up in the excitement.

But behind all that potential lies a darker reality. Even in the realm of mainstream content production, AI has tech experts and ethicists on edge. Summarizing content is a double-edged sword. At its best, it makes information clearer and more accessible; at its worst, it distorts, misleads, and oversimplifies. When AI summarizes content, it decides what’s important and what’s not. It strips away nuance, discards context, and often misses the very subtleties that are crucial to the message. In contradistinction to all academic rules on integrity, it is almost, or actually, impossible to trace what it has done. What you’re left with is often a distilled version that lacks depth, potentially leading to shallow understanding and, even worse, misinformation.

Another major concern is bias. The data used to train AI models reflects the world with a double skew. First, there is the skew of the data it absorbs, complete with all its prejudices, inequities, and perspectives. Second, there is the skew of the algorithm: when AI is summarizing, biases can be amplified, presenting a view of the world that perpetuates stereotypes or overlooks marginalized voices. In a world where AI-generated content becomes ever more ubiquitous, how do we ensure it accurately reflects the full spectrum of human experience, rather than reinforcing the biases that are either baked into its algorithms or presented to it as instructions by malign, misguided, or just incompetent users?

The risks extend beyond distortion and bias. AI’s ability to synthesize human voices is setting off alarm bells around privacy and consent. Imagine that the two people talking in that audio overview are not just placeholders but voices modeled on famous newscasters, film stars, criminals, or dead family members. Or imagine your voice, tone, and style perfectly mimicked by an AI without your permission. Just in the realm of personal production, never mind credit or identity theft, it’s a chilling thought. When impersonation costs nothing, the line between authentic and fake blurs, allowing fraud and manipulation on a massive scale.

And then there’s the issue of ownership. Who owns the content when AI synthesizes a new piece of text or voice? I happened to submit my own Substack link to NotebookLM, but Keith’s friend took a section of the pay-to-read Swim Practice and put it in the NotebookLM box. Who owns that audio ripped from Keith’s mind and writing work? What happens to intellectual property when a machine just effortlessly repackages someone else's work like that? Where do we draw the line between inspiration and theft? This question isn’t just academic; it has serious implications for writers, artists, and creators whose work risks being rehashed without recognition or reward.

Even before TikTok, Twitter (X), Meta and their ilk, there was pablum: content to pad out the airwaves and stretch between the adverts. We’re on the brink of a world filled with exponentially more content than ever before. But the question remains: will any of it be content we can trust?

Don’t Give Mr. Data Any Money

Emerging from this, too, is the question: who is any of this for? Human attention is limited and human money is also limited but, for the moment, humans are the only ones with money and power (albeit an ever smaller proportion of those humans). As soon as AIs can have money or power, there is no real need to produce content for humans except to keep them diverted or distracted. The networks (or new networks) can just bypass humanity and produce content for and by AIs at an unprecedented and inhuman rate.